Things I want to do

We will run LLM (chat AI) locally with image input using llama.cpp.

This article uses Qwen2.5-VL, Google’s local model.

It can run on AMD GPUs as well as on systems without a GPU (CPU).

Please refer to the following page for instructions on how to start Gamma.

environment setup

llama.cpp

Download the appropriate Zip file for your environment from the following page.

If you want to run it on Windows with an AMD GPU (or a system without a GPU), you can use the Vulkan package.

When using an Nvidia GPU, it will work with the CUDA package.

If it doesn’t work with the above version, use the CPU-optimized package.

Once you’ve extracted the downloaded file to a folder of your choice, you’re ready to go.

Model

Download two files from the following pages: one from Qwen2.5-VL-3B-Instruct-XXXXXXX.gguf and one from mmproj-Qwen2.5-VL-3B-Instruct-XXXXXXX.gguf.

execution

Run on the server

Execute the following command in the command prompt.

Model pathReplace with the path to the downloaded model.

llama-server -m Model path --mmproj Path of the mmproj model --port 8080

Once the model has finished loading, it will be displayed as follows:

main: model loaded

main: server is listening on http://127.0.0.1:8080

main: starting the main loop...

srv update_slots: all slots are idle

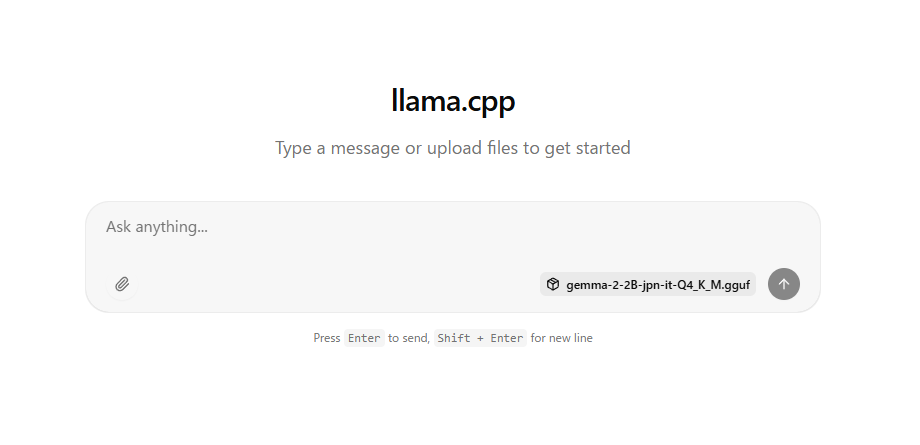

If the above message appears, open http://127.0.0.1:8080/ in a browser such as Chrome.

The following will be displayed, allowing you to chat.

You can drag and drop image files onto the page.

troubleshooting

When I entered an image, an AMD error report appeared and the program crashed.

I resolved the issue by doing the following two things. (I’m not sure which was the cause.)

1. Update the driver from the following page.

2. Launch AMD Software (Adrenalin Edition) Change the ‘Memory Optimizer’ setting in the ‘Performance’ → ‘Tuning’ tab to ‘Gaming’. (This increased the GPU’s memory usage from 2GB to 4GB.)

Websites I used as references

コメント